Wordcount: 7411

Average reading time: 37 minutes

Date published: 3/7/2019

Follow Kip on Twitter: @KipBoyle

Steve: Kip, it’s great to have you on the Nonconformist Innovation Podcast today to talk about your new book, Fire Doesn’t Innovate: The Executive’s Practical Guide to Thriving in the Face of Evolving Cyber Risks. As you can imagine, what jumped out at me is your use of the word innovation in the title, and that’s why I reached out to explore this concept with you. The cybercrime economy is fueled by innovation just as much as legitimate business is today, and I look forward to diving into that conversation with you.

Another brilliant aspect of the book is the subtitle, The Executive’s Practical Guide to Thriving in the Face of Evolving Cyber Risks. You make the case that cybersecurity is not just a technology problem but a business problem, and you make concepts like cyber risk, cybercrime, and cyberwar accessible through the analogy of germ theory. Thinking of cyber risk in biological terms, where neglected symptoms can have dire consequences, should be a wake-up call to business leaders.

Kip: Yes, I hope it will make something that often seems abstract into something that will seem more concrete.

Steve: It works the other way too: thinking in abstractions, such as metaphors, can actually help us get a handle on things that are quite technical. Before we get too far into the book, your background is fascinating as well. You are a former CISO for both technology and financial services companies, a former cybersecurity consultant at SRI International (originally the Stanford Research Institute), and you have led global risk management for a $9 billion company. Perhaps most interesting, you were a security director of wide area networks for the F-22 Raptor program.

So, Kip, the pleasure is mine. Welcome to the Nonconformist Innovation Podcast and thanks for taking time to be on the show.

Kip: You’re quite welcome, I’m happy to be here.

Steve: First things first, Kip, how does one get to be a security director for the F-22 Raptor? That is a dangerous and expensive-looking piece of machinery that is, in fact, so dangerous it cannot be exported under American federal law.

Kip: Yes, that’s right. The F-22 is an absolutely fascinating technological marvel just about any way you look at it. The aircraft itself is, in a sense, a computer with wings, and there are many reasons why. When you look at the jet, it has a very strange overall shape, with odd bumps and an unusual surface. It turns out that was done for stealth and low observability, to keep it from being easily tracked by radar. The consequence is that the airframe is inherently not aerodynamic, which means a human being cannot get it off the ground without computer assistance. A human being can’t fly it alone; a computer has to assist the pilot the entire time in order for the jet to do its job. That’s just one facet of how exotic this machine is. Back when I was working on it, around 1997, we didn’t even have the first production version. That first jet was coming off the production line about that time. It was really exciting because we hadn’t even had first flight yet. We didn’t know if it would work spectacularly, be mediocre, or, God forbid, crash the first time it took off. It was just electric. The way I got that job was that I was on active duty. I was a Captain in the Air Force, I volunteered for it, and I was just delighted when they chose me.

Steve: Awesome. I’d hope that was one of the projects in the late 1990s where Privacy and Security by Design was a top-of-mind issue in the military (I’m not sure it was).

Kip: Oh yeah, especially for something like the F-22. We had an intense focus on confidentiality for all the obvious reasons. We really didn’t want this amazing, innovative technology falling into the wrong hands. Sadly, though, it did end up falling into the wrong hands. The Chinese were very proud to roll their version of the F-22 out on the flight line years ago. It’s indisputable when you look at the Chinese stealth fighter and see how strongly it resembles the F-22. In that sense, it was a big failure on the part of the US government to keep that information confidential. However, something we don’t talk about today, which was a huge concern of ours (possibly even more concerning than confidentiality), was the integrity of the code base.

As I said, the F-22 is really a flying computer, and its brains are about a million lines of software, so clearly you don’t want anyone getting their hands on that. But imagine a maybe worse scenario: what if someone could put something in there? What if the adversary could put a little bit of code in there and then hook that code up to a big red button in their control room? Then, when the F-22 comes to bomb you, you just press the red button. The F-22 goes nose-down, accelerates, and crashes. So keeping things out of the code base was almost more important than protecting the code base from disclosure.
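In general software terms, the threat Kip describes is a code-integrity problem, and one common building block is checking every file against a trusted manifest of cryptographic hashes. The sketch below is a generic illustration using Python's standard `hashlib`; the manifest format and function names are hypothetical, and nothing here describes how the F-22 program actually protected its software.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_manifest(root: Path, manifest: dict[str, str]) -> list[str]:
    """Compare files under `root` with a trusted {relative_path: sha256} manifest.

    Reports three kinds of problems: files whose contents changed, files that
    are missing, and files that were added but never approved. The last case
    is the "someone put something in there" scenario.
    """
    problems = []
    actual = {str(p.relative_to(root)): p for p in root.rglob("*") if p.is_file()}
    for rel, expected in manifest.items():
        if rel not in actual:
            problems.append(f"missing: {rel}")
        elif sha256_of(actual[rel]) != expected:
            problems.append(f"modified: {rel}")
    for rel in actual.keys() - manifest.keys():
        problems.append(f"unexpected: {rel}")
    return sorted(problems)
```

Because an attacker who can change the code might also change the manifest, a real pipeline would typically sign the manifest and verify that signature first; this sketch leaves that step out.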

Steve: I think the parallels between the confidentiality and security requirements of the F-22 and the same needs in business today are no coincidence. You have a career spanning 20+ years and a broad perspective on the risks of IP theft, identity theft, financial fraud, corporate espionage, CEO extortion, targeted nation-state attacks on democracy, and so forth. It’s a scary list. We’re reading about these things today, when decades ago they may have sounded like science fiction. How worried should executives and business leaders be about this happening to them and their businesses?

Kip: Cyber is a new place. We’re conducting commerce in that place. We’re having coffee and chats with friends in that place. We’re trading pictures of children and grandchildren. And guess what? The cyber criminals are here too, and so are the cyber warriors. We’ve got cyber battalions; it’s been militarized, and it’s been turned into a platform for commerce. Everyone is connected to the Internet. It’s ubiquitous, and more and more people are connecting to it. So, if you’re on the Internet, you’re a target. It really isn’t any more complicated than that. That’s the answer to your question. If you have money, or something that can be used to create money, then they’re out to get you.

When I say “out to get you,” there are various shades of “out to get you.” On one hand, they’re going to find targets of opportunity. They are scaling their attacks through automation, searching the Internet for easy targets, so if you’re an easy target you can get swept up that way. Think of it as a giant net that gets placed in the water and dragged a few miles to capture everything down there. You haul that net up to see what you got, keep the good stuff, and throw the other stuff back. You don’t want to get caught in that net.

That’s one end. At the other end, if you’re someone of interest (and if you’re an executive of a company, you ARE a person of interest), then you could be specifically targeted. It’s not just about targets of opportunity, but about selected targets. That’s where you start talking about CEO extortion, business email compromise, etc. That is the audience I’m primarily talking to: decision makers in organizations and how they deal with this. We’ve seen attacks on agriculture, hometown dental practices, charities… Just 3 months ago, a charity, Save the Children (you’ve probably seen their advertisements on television), revealed that cyber criminals stole a million dollars from them. Why would a charity helping children deserve to have a million dollars stolen from them? Some people can look at a target like the “evil insurance companies” or the “big bad banks” and think, well, they kind of got what they deserved. But really? Save the Children? What that essentially says is that our adversaries (the people who are attacking us) are amoral. You could argue that they are immoral, but they are like a germ, and germs don’t discriminate. I can get a cold, former President Barack Obama can get a cold, Vladimir Putin can get a cold. We’re all equally susceptible to germs. That’s why I use the germ analogy: it’s so democratic. Everyone is at risk.

Steve: You’re onto a good point, Kip. I know you talk a lot about this in your book and elsewhere. I know you aren’t one to use FUD (fear, uncertainty, and doubt) when discussing existential threats, even ones with dire consequences, in part because CISOs are already numb to these scare tactics. How do you go about facilitating productive dialogue in the C-suite and helping organizations make progress with their risk management programs without putting your credibility at risk?

Kip: You’re correct. It’s critical not to fall into a pattern of scare tactics. It absolutely won’t work, and doing it puts your own credibility at risk. Executives can be criticized for a lot of things, but you will rarely hear an executive criticized for being stupid. They’re not stupid; they’re extremely smart. How they use their intelligence is another question. Are they building their business for good, or evil, or whatever? We stay away from fear. What we focus on instead is data, and there are a couple of different kinds of data that we focus on. One type is open source information that tells us what’s really going on in the world, for example, Save the Children and their million-dollar loss. We watch that because we want to know the patterns of cyber-attack that are occurring these days. That’s a valuable source of data. The other type of data we gather is within our customers’ organizations. We interview the influencers in their organizations, the people who know the most about what’s going on. Those interviews are highly structured, and we create a data set from them: actual numbers. By using that approach and gathering that data, we can go back to the executives and look at the data together. Then we ask: can this data help us make great risk decisions? Can this data help us with the challenge of internally marketing the fact that we need to make some changes? For example, when is the last time you ran into problems because you told someone they needed more security? All the time! People don’t want more security. So you’ve got to engage not only in risk decision making and internal marketing; you also have an external marketing burden to take on. Again, it’s based on data, and it’s about decision making and communicating.
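As a rough sketch of turning structured interviews into “actual numbers,” assume each interviewee scores the organization on the five NIST Cybersecurity Framework functions Kip lists later in the conversation, using a 0 to 10 scale. The scale, field names, and sample answers are all hypothetical; Kip doesn't describe his actual interview instrument here.

```python
from statistics import median

# Hypothetical structured-interview answers: each interviewee rates the
# organization on each function using an assumed 0-10 scale.
responses = [
    {"identify": 6, "protect": 7, "detect": 3, "respond": 4, "recover": 5},
    {"identify": 5, "protect": 8, "detect": 2, "respond": 6, "recover": 5},
    {"identify": 7, "protect": 6, "detect": 4, "respond": 3, "recover": 6},
]


def summarize(responses: list[dict[str, int]]) -> dict[str, tuple[float, int]]:
    """Return {function: (median score, spread)}, where spread = max - min.

    The median keeps one outlier answer from skewing the result; a large
    spread flags a function where interviewees disagree and executives
    may want to dig in.
    """
    summary = {}
    for function in responses[0]:
        scores = [r[function] for r in responses]
        summary[function] = (median(scores), max(scores) - min(scores))
    return summary


for function, (score, spread) in summarize(responses).items():
    print(f"{function:<9} median={score} spread={spread}")
```

The median and spread are one simple choice of summary; the point is only that structured answers can be reduced to numbers an executive can look at and question.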

Steve: I like how you frame it with a series of inquisitive questions that avoid any kind of confirmation bias. You’ve made it clear on your website that you’re not there to sell a program, product, or service, and that your independence as a trusted advisor means a lot to you. If you frame this as an inquiry with a level of curiosity, you’re going to find the truth. Whereas if you’re a vendor with an unethical hidden agenda, your intentions are going to be discovered very quickly.

Kip: Inevitably, if you have a hidden agenda, it will be revealed at some point. You may not be the one who reveals it; it may be revealed for you. That’s another thing about executive decision makers. They’re very interested to know that the data they’re basing decisions on, and the people they’re interacting with, are as unbiased as possible. Or, if there is bias, they want to know what it is so that they can discount for it. The marketing machine trying to sell people cyber security solutions right now is incredible. I can’t believe how much money is being spent just on marketing. Executives and decision makers are absolutely overwhelmed with marketing messages, and all of them are inherently biased. And if they’re not looking at those, they’re reading newspaper or magazine headlines, and all that stuff is biased too. It masquerades as objective reporting, but the fact that the news media tends to focus on sensational, odd events tells you that you are looking at the long tail of what’s happening in the world, and that is biased. It can scare you into thinking you’re susceptible to a bizarre cyber attack when the reality is you’ve just got some straightforward, meat-and-potatoes stuff that needs to be dealt with.

Steve: Absolutely, this is a bit of a soapbox issue for me. There are journalists out there who like to talk about data breaches, and one that made the news lately happens to be a 3-year-old breach. The media picking it up and sensationalizing it has some folks in my circle a bit upset. The optics created around it, and the signal they send about how to prioritize and treat it, are a bit off.

Kip: I encourage the people who work with me to recognize that many of their sources contain a lot of bias and that they’ve got to filter for it. Executives are decision-making machines. That’s what they get paid to do. I’ve been working with executives since 1992, helping decision makers understand what they should do about their cyber risks. What they really want is for someone to tee up a decision for them: set up 3 choices (A, B, C) with the pros and cons of each, and then let them pull the trigger on one. That’s what they really want, so a big part of what we try to do is facilitate their decision-making.

Steve: I like that. There are some adversaries getting paid $300,000 to go find vulnerabilities in executives. We’re talking about CEO extortion, which sounds like a Tom Clancy novel, but it is real. And the hype extends even to legitimate vendors. I like how Jake Williams tweeted today about the RSA Conference next week. He puts vendors on notice by saying, “Next week if you sales dweebs tell me that you use deep learning, AI, or machine learning but won’t explain how, I’m live tweeting all the fail with #vendorwordvomit.”

Kip: Vendor Word Vomit, I love it. This has been going on for a long time: this idea that there’s gold in them thar hills and we’re going to spin up a company to go and get that gold, using whatever sales tactics or mechanisms it takes to get there. I’ll give a plug right now. If you’re listening to this podcast and you’re trying to sell cyber security solutions, services, products, etc., you should be listening to David Spark’s CISO/Security Vendor Relationship Podcast.

Steve: Great podcast! I got a chance to listen to an episode yesterday, and I would encourage listening to it as well. Coming back to the book now, I am intrigued by your central argument that fire doesn’t innovate, but cybercriminals do. In my own experience, I have seen my fair share of underfunded security programs and fixed mindsets among security leaders (e.g., “This is my strategy for 2019, so I’m all set.”). Good enough usually isn’t, and it certainly isn’t good enough against hackers. How well do you think business leaders and executives understand the dynamic nature of cyber risk today? I know all of your customers are decision-making machines, but in general, if they don’t have the privilege of having you as their trusted advisor, how well do you think business leaders understand this? Is it just me, or is apathy a major problem?

Kip: They don’t understand it well at all, which is not necessarily their fault. Our human experience is with static risks, and fire is the perfect example. Fire doesn’t change. It’s a well-understood recipe: take fuel, add oxygen and heat, and you will get fire. If you don’t want fire anymore, you remove one or more of those ingredients and the fire will go away. We figured this out a long time ago in the course of human history. In the 1800s in the United States we lost major cities (huge portions of Chicago, Seattle, and San Francisco burned to the ground) because we were trying to use fire at scale, but we didn’t know how to do it. We did figure it out, though, because these days the risk of fire is pretty low. We know which building materials don’t burn very well, and we’ve installed massive infrastructure to fight fire when it breaks out. We’ve got sprinkler systems, fire hydrants, and fire departments, and we’ve even got fire insurance, so that if it does get out of control, financially speaking, we can be made whole again. Fire is a good example of a static risk, and that’s what human beings are used to dealing with, but cyber is not a static risk. We deal with executives who are accustomed to saying, “Where’s the checklist? If I have a great checklist, I can mitigate risk.” What they don’t realize is that a checklist works for a static risk. A checklist is a terrible tool when you’re trying to deal with dynamic risk, because the risk won’t respect the checklist at all. It’s as if fire said, “They’re using a lot of bricks now; I’d better learn how to burn bricks, because if I can do that, I can be way more successful at causing mayhem.” That’s why it’s not well understood, and if you couple that with the never-ending marketing pitches and news media we talked about, you’re getting a stream of messages on a topic you don’t understand, and that creates apathy.
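A toy model can make the static-versus-dynamic distinction concrete. In this sketch (an illustration, not a threat model), the fire rule stays correct once applied, while a hypothetical adaptive attacker reads the checklist and picks whatever it leaves out; the technique names are made-up labels, not a real taxonomy.

```python
import random
from typing import Optional


def fire_possible(fuel: bool, oxygen: bool, heat: bool) -> bool:
    """Static risk: the recipe never changes, so removing one ingredient always works."""
    return fuel and oxygen and heat


# Hypothetical labels for ways in; not a real threat taxonomy.
TECHNIQUES = ["phishing", "credential_stuffing", "unpatched_server",
              "insider", "supply_chain"]


def adaptive_attacker(checklist: set[str], rng: random.Random) -> Optional[str]:
    """Dynamic risk: in random order, return the first technique the checklist misses.

    Returns None only when every known technique is covered.
    """
    options = TECHNIQUES[:]
    rng.shuffle(options)
    for technique in options:
        if technique not in checklist:
            return technique
    return None


# Removing one fire ingredient keeps working forever...
print(fire_possible(fuel=True, oxygen=True, heat=False))  # False
# ...but the attacker simply routes around whatever the checklist covers.
checklist = {"unpatched_server", "credential_stuffing"}
print(adaptive_attacker(checklist, random.Random(42)))
```

Real adversaries also invent techniques that aren't on anyone's list yet, which this sketch doesn't model; that only widens the gap between the two kinds of risk.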

Steve: I love it, and this is why I think the book is brilliant, Kip. I was drawn to it because of this emphasis and the parallels between nonconformist innovation and the asymmetrical nature of cyber threats. US targets are under constant cyber-attack. Shape Security did a study reporting that 80 to 90% of e-commerce sites’ global login traffic is attributable to credential stuffing attacks, and the marked increase in zero-day exploits and the use of AI by cybercriminals highlight the need for businesses to be more proactive and vigilant than they are today. Is reasonable cybersecurity reasonable? Can you help by putting some contours around what effective cyber risk management looks like in 2019?

Kip: It’s very different from what it looked like 5 years ago, and especially 10 years ago. That term you used, reasonable cyber security, is one the Federal Trade Commission uses a lot. They’re signaling to people conducting commerce in the United States that they need to adhere to a different standard than they used to. Cyber is a highly adaptive, evolving, dynamic risk. For a long time, the paradigm for network and computer security was that if you build thick walls, adopt a castle mentality with one way in and one way out for the data, and dump a bunch of money into prevention, you’re going to be okay. That resembles the human experience of defending oneself. That’s where castles came from: the idea that if I put a thick wall between me and the attacker, I’ll be okay. The FTC is saying that doesn’t work anymore, because you’ve got your quaint castle with the thick walls, but your adversary is flying drones over the top of you and dropping grenades into your courtyard. It doesn’t work anymore.

Steve: Some things never change. Some people are always going to want to build walls.

Kip: It’s the human experience. What we understand is what feels safe. It’s in our DNA and our blood. We’ve got to realize that this is a different scenario and practice reasonable cyber security. Now that I’ve told you why and where reasonable cyber security comes from, let me tell you what it is. If you think about the NIST Cybersecurity Framework, which the FTC points us to, there are 5 core functions that make up reasonable cyber security. First, you need to identify your assets, risks, and threats. Second, you need to protect your assets against those threats. Third, you need to detect when you have an incident. Fourth, you need to be able to respond. And fifth, you’ve got to be able to recover. Those are five business functions, not technology functions. In today’s world, an organization needs to be able to do all five of those concurrently, and at a minimal, reasonable level of acceptability, all the time. That’s what reasonable cyber security is. Underpinning all of this is the idea that you cannot, in this day and age or in the future, expect to prevent all major cyber attacks that come at you. You’ve got to be great at detecting them and responding to them as fast as possible. The data shows that this works. If you compare the cost Target paid for its data breach to the cost Home Depot paid, for breaches that were similar in scale and impact, Home Depot paid roughly half of what Target did. One of the reasons Home Depot came out so much better is that they were practicing these five functions. They did a much better job at responding and recovering than Target did, and that kept their costs under control. This isn’t just some abstract theory of ‘What is reasonable in cyber security?’ We have data that shows that this is the way to be.
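Kip's definition has two parts: all five functions, and an acceptable level in each, at the same time. A minimal sketch of that test, assuming each function has already been scored on some common scale; the scale, threshold, and names are hypothetical. (The function list is from version 1.1 of the framework, current at the time of this interview; version 2.0, published in 2024, added a sixth function, Govern.)

```python
from enum import Enum


class CsfFunction(Enum):
    """Core functions of the NIST Cybersecurity Framework, version 1.1."""
    IDENTIFY = "identify"
    PROTECT = "protect"
    DETECT = "detect"
    RESPOND = "respond"
    RECOVER = "recover"


def below_minimum(scores: dict[CsfFunction, float], minimum: float) -> list[CsfFunction]:
    """Return every function scoring below `minimum`; unscored functions count as 0.

    All five must clear the bar at the same time, so a single weak function
    is enough to fail, however strong the others are.
    """
    return [f for f in CsfFunction if scores.get(f, 0.0) < minimum]


# Hypothetical self-assessment on an assumed 0-10 scale; RECOVER was never assessed.
scores = {
    CsfFunction.IDENTIFY: 6,
    CsfFunction.PROTECT: 9,
    CsfFunction.DETECT: 2,
    CsfFunction.RESPOND: 5,
}
print([f.name for f in below_minimum(scores, minimum=4)])  # ['DETECT', 'RECOVER']
```

Treating an unassessed function as zero is a deliberate choice: a function nobody has looked at can't be assumed to be at an acceptable level.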

Steve: There is a debate. Common wisdom would say that compliance does not equal security. To a certain degree I agree with that.

Kip: Target and Home Depot were both PCI compliant when they were breached.

Steve: So, having compliance with the baseline of the NIST framework is table stakes.

Kip: It absolutely is. I was working recently with a biotech company that is a customer. I asked them what they were most concerned about, and they said, “We’ve got this intellectual property that we’ve spent hundreds of millions of dollars developing, and we’re going to commercialize it. It will be the basis of our business, and if we lose control of it, we might go out of business.” As a result, they need to be much better at detecting a cyber-attack than at the other functions. You can’t be world-class at everything, unless maybe you’re the NSA or Boeing or Lockheed. Perhaps if you’re a giant company with massive resources, you can aim to be world-class at everything. If you’re anything less than that, you need to make tough choices about what you need to be great at. This company decided to be great at detecting. That would be their long-haul strategy for protecting themselves through reasonable cyber security.
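The biotech example amounts to setting a higher target for one function than for the rest and then working the largest gaps first. A minimal gap-analysis sketch; the scores, the 0 to 10 scale, and the targets are all hypothetical.

```python
# Hypothetical current-state scores on an assumed 0-10 scale.
current = {"identify": 6, "protect": 7, "detect": 3, "respond": 4, "recover": 5}

# Assumed policy: a "reasonable" floor of 5 everywhere, except detection,
# where this organization has decided to be exceptional.
target = {function: 5 for function in current}
target["detect"] = 9


def prioritized_gaps(current: dict[str, int], target: dict[str, int]) -> list[tuple[str, int]]:
    """Return (function, gap) pairs where current < target, largest gap first."""
    gaps = [(f, target[f] - current[f]) for f in target if current[f] < target[f]]
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


print(prioritized_gaps(current, target))  # [('detect', 6), ('respond', 1)]
```

Functions already above their target (here, identify and protect) simply drop out, which is one way of expressing "you can't be world-class at everything."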

Steve: That’s a good point. I’m asking myself where the gap is, if the NIST guidelines are already a good framework. When you’re running a workshop with executives, what conclusions are you drawing? You’re not letting your executives leave concluding only that NIST is a good framework, which in fact it may be, but that’s not the key takeaway. The key takeaway is the need to define core competencies based on the business model and to become exceptional in certain areas.

Kip: Yes, the cyber security framework is a tool. Most people don’t buy a tool because they want a tool; they buy a tool because they want to go out and do something. Hammers and saws will let you do all kinds of things. You could build a house, a chair for your porch, or a toy for your kids. It’s just a tool. I encourage them to wield the tool to realize what’s most important to them. Again, set those decision makers up for success and make priorities. If they’re focused on making decisions, I guarantee those decisions are about prioritization, because unlimited risks are coming at them and they have a limited budget.

Steve: Just yesterday on a podcast I was using the analogy of the security marketplace being a bit like the IKEA model. You have a lot of vendors, you have multiple cloud providers, multiple identity providers, multiple frameworks, heterogeneous computing environments, all of these things intending to do good, but there’s still assembly required.

Kip: Definitely, there is still assembly required. There are a lot of executives out there who are GREAT at putting the furniture together, probably better than most. But what they must decide is how to prioritize their time and whether the work can be delegated. A lot of them have a hard time letting go of it. I keep coaching them: there are things that need to be done that only YOU can do, but the things other people can do, let them do.

Steve: Coming to prioritization. Accepting your germ theory analogy for understanding risk in the digital world, we know that early detection of disease is key to lowering mortality rates, but so is following a hygiene regimen. What are some of the top risks that businesses and executives need to be aware of, and what hygiene practices can help keep them and their systems protected?

Kip: This is an important issue that I help people understand. As the FTC says, building a strong castle and hiding in it is not a suitable strategy today or for the future. There’s a lot of old thinking about where risks are coming from. Today, the major risk is phishing, or social engineering. In many respects we’ve done such a good job of protecting our networks that our adversaries don’t really attack them anymore, unless you’re Mossack Fonseca, the Panamanian law firm that fumbled the Panama Papers, or unless you’re Equifax, which everyone knows suffered a huge breach. Both of those were breached because of horrible network cyber hygiene. They just weren’t patching their machines or putting in security updates, so the attackers walked right through. Assuming you’re doing a good job with hygiene, the way attackers are getting in is through phishing. That’s not an attack on technology. That’s an attack on human emotion. It’s the oldest con in the world. It asks: how can I get greed or fear to rise up inside of you and hijack your brain? How can I get the reptilian brain we all walk around with to push the keys on the keyboard and give the bad people what they want? That’s the real risk, and that’s what I focus on in my book. You’re only going to get so far with technological defenses when the target is a human being. You need to do things to strengthen that human being so they’re not so susceptible to being emotionally manipulated. And if they are emotionally manipulated, have things in place that will dampen or prevent them from doing something bad.

The classic case is business email compromise. That’s when someone sends an email to someone in Accounts Payable that says, for example, “Hi, I’m Joe, I’m the CEO. I’m on a long trip and can’t be disturbed, but real fast, I want you to wire 25 million dollars to a brand-new supplier. I just put a deal together in the Far East. Here’s all the information, so please do this as fast as you can; our business depends on it.” And this Accounts Payable person desperately wants the CEO’s approval and wants to show their worth, so they fall for it and move the money. You should have a two-person rule, for example, that says no one person can move $25,000,000 on the strength of a single terse email. There must be processes in place to compensate for when people get emotionally hijacked.
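A two-person rule is easy to state and straightforward to encode as a control in a payments workflow. A minimal sketch, assuming a hypothetical $25,000 threshold and a simple in-memory request object; a real accounts-payable system would enforce this in its own approval engine.

```python
from dataclasses import dataclass, field

# Hypothetical policy limit: wires at or above this amount need two approvers.
DUAL_APPROVAL_THRESHOLD = 25_000


@dataclass
class WireRequest:
    amount: float
    payee: str
    requested_by: str
    approvals: set[str] = field(default_factory=set)

    def approve(self, approver: str) -> None:
        """Record an approval; the requester can never approve their own wire."""
        if approver == self.requested_by:
            raise ValueError("requester cannot approve their own wire")
        self.approvals.add(approver)  # a set, so repeat approvals by one person count once

    def can_release(self) -> bool:
        """Large wires need two distinct approvers; smaller ones need one."""
        required = 2 if self.amount >= DUAL_APPROVAL_THRESHOLD else 1
        return len(self.approvals) >= required


# The "CEO" email lands in Accounts Payable, and the clerk keys in the wire.
wire = WireRequest(amount=25_000_000, payee="Brand New Supplier", requested_by="ap_clerk")
wire.approve("controller")
print(wire.can_release())  # False: one approval is not enough at this size
wire.approve("treasurer")
print(wire.can_release())  # True
```

Two approvers can still be fooled by the same message, so this kind of rule is usually paired with out-of-band verification, such as calling the requester back on a number already on file rather than one supplied in the email.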

Steve: That’s a very valid point. There’s also research that says that 1 in 5 employees would sell their work password.

Kip: That’s right. Have you ever heard of someone getting fired or disciplined for a cyber security lapse? It’s very rare these days, and it was even rarer in the past. There’s no skin in the game, so why wouldn’t they sell it? The consequences of cyber crime are not felt by the person who was compromised. It’s a very weird aspect of the time we live in: a cyber crime that involves you doesn’t really affect you. It’s unlike walking down a dark street and getting your purse or wallet stolen. You may get hit, and it might hurt. Then you may have to go home and explain to your family that you did something dumb, and you may be ashamed as a victim because someone took advantage of you. It’s very different when someone buys your password from you for a candy bar. What could go wrong? And if something does, who will bear the cost? Where will the consequence fall? It’s not going to fall on me. How would anyone even know that the password came from me?

Steve: There’s always the possibility that they bought all your company’s passwords off the dark web. There’s the human element, and often hackers are coming right through the front door with known credentials.

Kip: That’s absolutely correct. We have to help people stay far away from that, for example by giving them a high-quality password manager. Credential stuffing works because people use the same usernames and passwords on multiple sites. If you use the same login information on a genealogy site that you use at the brokerage firm that manages your 401(k) plan, the genealogy site is easier to tip over, and the stolen credentials can then be used where the action is to take your money. A high-quality password manager automates something humans are not great at doing without assistance. You need a different password for every site you use, and that password had better be 16 to 20 characters long. No human being can do that without assistance. That’s why I advocate for people using a password manager. I tell executives: you set the tone for your team. If you’re using a password manager, they are more likely to use one. If you tell them to use one but can’t be bothered to do it yourself, you’re whistling in the wind.
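What a password manager automates is essentially this: a fresh, long, random password for every site. A minimal sketch using Python's standard `secrets` module; the site names are placeholders, and real password managers also handle encrypted storage, syncing, and autofill, none of which is shown here.

```python
import secrets
import string

# 52 letters + 10 digits + 32 punctuation marks = 94 symbols.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password drawn with the cryptographically secure `secrets` module."""
    if length < 16:
        raise ValueError("use at least 16 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def passwords_for(sites: list[str], length: int = 20) -> dict[str, str]:
    """Give every site its own independent password, so a breach at one can't be replayed at another."""
    return {site: generate_password(length) for site in sites}


vault = passwords_for(["genealogy.example", "brokerage.example"])
for site, password in vault.items():
    print(site, password)
```

With the 94 symbols in `ALPHABET`, each character adds log2(94), about 6.55 bits of entropy, so 16 characters give roughly 105 bits and 20 give roughly 131. Uniqueness per site matters as much as length: it is what stops a password stolen from one site from working at another.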

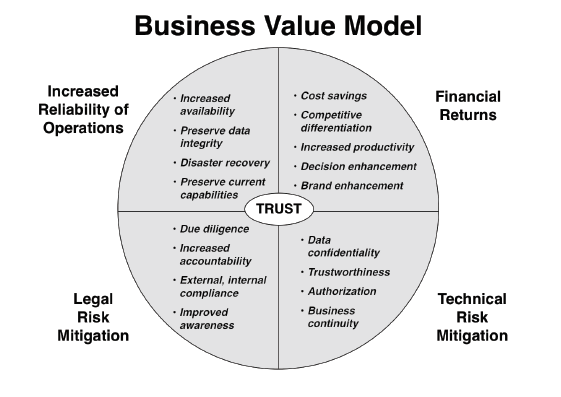

Steve: Good point, we could probably spend a whole day talking about protection. You developed a nice chart that highlights the business value of improving cybersecurity. In my experience, making a business case for the ROI of cybersecurity alone is very difficult. Answering that question, “How much less risk will we have after spending the millions of dollars you are asking for?” is a quantification exercise that seems more of an art than science. What approach has worked for you, and have you observed one approach that is more effective than others being used in the organizations you work with?

Kip: In part 2 of my book, I present a step-by-step approach for doing a risk assessment. When I was advising executives, I tried everything I could to tee up great decisions for them and help them understand how they should spend their money. It started with low, medium, high and red, yellow, green tools. Those were not very satisfying. I’d spend 6 weeks trying to put something together, and my executive would say, “That’s very interesting, but I could have done that on the back of a napkin at the bar in 20 minutes and come really close to what you have.” So that wasn’t such a hot idea for executives. Then I went to the other extreme and started doing quantitative work using statistics and Monte Carlo simulations. It’s a wonderful system. FAIR (Factor Analysis of Information Risk) is an example of a quantitative approach to measuring cyber risk, or any risk. It tries to figure out, if the risk is worth $100,000, whether I can spend $10,000 and reduce that risk to something very low. What I found is that most executives don’t have the patience for it unless they are already quantitatively minded, or they personally decide to invest the time to understand it. I’ve found it doesn’t work very well for executives, particularly in the middle market. If you’re an organization with less than $1 billion in revenue, those systems don’t tend to work as well. I’ve spent a lot of time working in the middle market, and what I’ve found is that there is nothing between red, yellow, green and Monte Carlo simulations to help executives make decisions. That’s why we do the work the way we do. It is designed specifically for executives in middle-market companies.

As for making the ROI case for cyber security, the idea that you can get money back for money spent does sometimes come up. A password manager is a great example, because I’m automating something and reducing someone’s workload. I’m getting increased productivity and increased security at the same time, which is kind of rare. However, there are other business benefits to spending money on cyber security, like increased reliability of your company’s operations. If you can keep your systems from going down, you won’t have as many losses due to system failure. There’s also a decreased risk that you’ll get into legal trouble if you’re ever charged with negligence. If you have a data breach, you’re going to want to show up in court with a compelling story about how you weren’t negligent and did act reasonably and responsibly. We bring all four of those benefit areas into play and put them all on the table so that we can draw on any of them. That not only helps justify the spend, but also creates a basis for communicating about it. Internal and external marketing of cyber security is very hard. Anything you can do to make it easier is a good thing.
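One way to put several of those benefit areas on the table at once is a simple ROI calculation, (benefit - cost) / cost, where the benefit adds up productivity, reliability, and legal-exposure savings. This is a minimal sketch, not Kip’s method: the `SecuritySpend` class and every dollar figure for the password manager are hypothetical, and producing honest estimates for each benefit is the hard part the code does not do.

```python
from dataclasses import dataclass


@dataclass
class SecuritySpend:
    """Hypothetical inputs for one proposed cyber security investment (annual dollars)."""
    cost: float
    productivity_gain: float = 0.0       # e.g. time saved by a password manager
    avoided_downtime_loss: float = 0.0   # reliability: fewer losses from system failure
    reduced_legal_exposure: float = 0.0  # lower expected cost of negligence claims

    def total_benefit(self) -> float:
        """Sum every benefit area instead of counting risk reduction alone."""
        return (self.productivity_gain
                + self.avoided_downtime_loss
                + self.reduced_legal_exposure)

    def roi(self) -> float:
        """Simple ROI as a fraction of cost: (benefit - cost) / cost."""
        return (self.total_benefit() - self.cost) / self.cost


# Made-up figures for illustration only.
password_manager = SecuritySpend(
    cost=12_000,
    productivity_gain=15_000,
    avoided_downtime_loss=5_000,
    reduced_legal_exposure=4_000,
)
print(f"Total benefit: ${password_manager.total_benefit():,.0f}")
print(f"ROI: {password_manager.roi():.0%}")
```

With these made-up numbers most of the return comes from the productivity gain; counting only the reliability and legal benefits ($9,000 against a $12,000 cost) would make the ROI negative, which illustrates why drawing on every benefit area matters.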

Steve: In reviewing your checklist, you propose some simple formulas for figuring out how much to spend on cyber security. My thought was, this seems so easy to understand and useful.

Kip: Good, that’s the goal!

Steve: I didn’t have to figure out some complicated system. I conceptually understand the quantification movement; that said, it’s more of an art than a science. Getting the numbers to build an accurate model seems like a lot of hard work.

Kip: It is hard work. There are some enterprises who do it and they do it very well and it works well for them and I would never suggest that they stop doing it. However, if you’re an organization who isn’t doing it and it isn’t a good fit for you, what’s the alternative? Up until now it has been red, yellow, green; high, medium, low. It’s not sophisticated enough. We wanted something very practical, and simple, but not simplistic. You want to keep things simple, but if you get too simple, you’re back to the red, yellow, green; high, medium, low.

Steve: One of the defining characteristics of nonconformist innovation is being a catalyst for change. Our world depends on nonconformity to push boundaries, ask the difficult questions, create new markets, develop new products for existing markets and deliver differentiated value. In your book, you talk about how DHL turned cyber resilience into a competitive advantage. Can you explain how that works, exactly? And if I can put you in the hot seat for this question, imagine talking to the CFO of your next big client when answering this question. How do you translate abstract competitive advantage into something that is measurable?

Kip: I don’t think I would ever suggest spending money on a cyber security program simply to try to create a competitive differentiator. What I would say to executives is that it’s a business value you will accrue when the day comes that you are attacked or have a massive cyber failure. We must expect that everyone is going to have that every so often, because the digital world is so dangerous. In the book I talk about DHL and FedEx. In Europe, FedEx has a small-package delivery business called TNT. TNT and DHL are competitors in Europe. A few years ago, there was a piece of virulent malicious code called NotPetya. NotPetya was released in Ukraine and had a much larger effect than its creators expected. It did about $10 billion of hard economic damage. DHL and FedEx TNT were both hit by this worm. People thought it was ransomware because it displayed a screen that said it was ransomware: pay money and you’ll get your data back. The reality was that if you paid, you wouldn’t get your data back, because that was a ruse. The true purpose of NotPetya was to get onto a computer and destroy it, rendering all the data on that machine unusable. So all these companies had to throw away all the laptops, workstations, and servers.

Both DHL and FedEx got hit, but FedEx TNT had to shut their doors. They suffered such a data failure that they didn’t know where all the packages in their care were. They were stacked up in warehouses all over the place. They couldn’t take new shipments, and they couldn’t deliver the packages they had, because in today’s world if you can’t move data, you can’t move freight. That’s how dependent we are on data. DHL never shut their doors, even though they got hit. Imagine you’re a person in Europe with a small business and you want to send a package. You used to use TNT, but now they won’t come and take your package. What do you do? You go to DHL, because they’re taking and delivering packages. You switch. Imagine what DHL would normally have to do from a business perspective to encourage a TNT customer to switch. They’d have to run a sale or give them something tangible. Because of NotPetya, DHL didn’t have to do that. They just sat back and benefited from customer defections. What’s great about this example is that you can go look at their financials, because they’re both publicly traded companies. You can see that FedEx TNT took a $300 million hit because of NotPetya. Not only did DHL not take a hit, but their package shipments, revenues, and volumes went up and are staying up. That is a fantastic story about how cyber resilience can turn into a competitive advantage.

Steve: In the book you talk about the dashboards you create for your customers, which give executives prioritized lists. They can see the primary benefits, which you might call the mandatory requirements, and the secondary benefits, which are optional business benefits, along with your formula you call 3TCO. Would you argue that DHL was going after not only the mandatory primary benefits but also the secondary, non-required benefits, such as resiliency and ensuring the integrity of their customer data and the customer experience? Is that the difference?

Kip: I would characterize it a little differently. People who have a compliance mentality about cyber security are going to end up more like FedEx TNT. They’re checking the boxes and doing the minimums. They should be thinking about it like this: I am at risk because I use the Internet, and no matter what comes down the “information super highway,” I need to stay resilient. If I’m thinking that way, I’ll think more, smarter, and differently than if my goal is just to comply. I think that’s the biggest difference that I see. Are you treating this as some nasty little thing that you must do, or are you looking at it as an absolute requirement if I’m going to be on the Internet today doing business?

Steve: Those are some key thoughts, Kip. When we’re talking about what we have to do and what we should do when it comes to cyber resilience, what recommendations can you offer to executives and other leaders to help them turn nonconformist innovation and cyber resilience into a competitive advantage for their business?

Kip: The reason I put innovation in the title of my book is because fire can’t innovate. It’s static. If we manage our businesses in a static way, then we’re doomed to be trapped in this paradigm of not knowing how to deal with our cyber risks. The opportunity to be innovative here is to stop thinking of cyber risk as a technology problem and start thinking of it as a business problem. Elevate it to the same level of care that you give to risks to sales, order fulfillment, and accounts receivable. Failures in any of those long-established business functions can put you out of business or greatly impair your ability to grow. Cyber has become that. That’s my encouragement to executives: see it as a business problem. When you do that, you can bring way more to the table to deal with it than you could thinking about it in the old way. If you think about it as a business problem, then what do you have? Well, I could use some technology. Great, you had that in the previous paradigm; what else? I can start to think about how to bring people into this and how to change our processes. I can think about how to manage differently or better. Now you’re bringing a four-cylinder engine to the job, whereas you had a one-cylinder engine before.

Steve: I love it. At a conference a few years ago, I ran into a former colleague of mine who was a cyber security director for incident response at one of the large companies that I’ve worked for. He turned me down for a conversation and informed me that his budgets were fixed, and he was locked and loaded for the new year and that they already had their plans set. I just walked away from that conversation thinking “WTF?”. He’s locked and loaded? His plans are set? I love how in your book you recommend check-ins weekly, monthly, and quarterly to ask how are we doing, and do we need to make mid-course corrections? I just think that’s brilliant.

Kip: The adversary is not sitting around thinking, well, we’d really love to run these different attacks, but our tech strategy is locked and loaded for the year, so I guess we’ll just have to run this one out. Gosh, it’d be great if I could use these new attack tools, but the boss will never go for that! It’s not what they’re doing.

Steve: I’ve really enjoyed our conversation. One final question. Where would the listeners go to learn more about your new book and how can they get in touch with you if they want to learn more and figure out what their dashboard and 3TCO look like?

Kip: I’m happy to have a conversation with anyone about this. I hope it’s obvious that it’s an area I do well and enjoy being in. If you want to look at my book you can get the first chapter of it for free at www.firedoesntinnovate.com. If you like it, you can get it from Amazon or Barnes and Noble. Pretty soon it will be available on Apple iBooks and elsewhere. If you want to chat with me, I’m on Twitter @kipboyle. I’m also hanging around on LinkedIn, just type my name into the search box. I think I’m the only Kip Boyle in the entire LinkedIn ecosystem so it should be easy to find me, so come find me.

Steve: Sounds good and easy, which is a good way to have it. Kip, thanks so much for your time. I hope we can do this again.

Kip: You bet, man, thanks for having me here.